!pip -q install langchain huggingface_hub transformers sentence_transformers accelerate bitsandbytes

import os

os.environ['HUGGINGFACEHUB_API_TOKEN'] = '<ENTER_HUGGING_FACE_API_KEY>'

# text1 = "A common use case when generating images is to generate a batch of images, select one image and improve it with a better, more detailed prompt in a second run. To do this, one needs to make each generated image of the batch deterministic. Images are generated by denoising gaussian random noise which can be instantiated by passing a torch generator."

text1 = """

Once upon a time there was a dear little girl who was loved by every one who looked at her, but most of all by her grandmother, and there was nothing that she would not have given to the child. Once she gave her a little cap of red velvet, which suited her so well that she would never wear anything else. So she was always called Little Red Riding Hood.

One day her mother said to her, "Come, Little Red Riding Hood, here is a piece of cake and a bottle of wine. Take them to your grandmother, she is ill and weak, and they will do her good. Set out before it gets hot, and when you are going, walk nicely and quietly and do not run off the path, or you may fall and break the bottle, and then your grandmother will get nothing. And when you go into her room, don't forget to say, good-morning, and don't peep into every corner before you do it."

I will take great care, said Little Red Riding Hood to her mother, and gave her hand on it.

The grandmother lived out in the wood, half a league from the village, and just as Little Red Riding Hood entered the wood, a wolf met her. Little Red Riding Hood did not know what a wicked creature he was, and was not at all afraid of him.

"Good-day, Little Red Riding Hood," said he.

"Thank you kindly, wolf."

"Whither away so early, Little Red Riding Hood?"

"To my grandmother's."

"What have you got in your apron?"

"Cake and wine. Yesterday was baking-day, so poor sick grandmother is to have something good, to make her stronger."

"Where does your grandmother live, Little Red Riding Hood?"

"A good quarter of a league farther on in the wood. Her house stands under the three large oak-trees, the nut-trees are just below. You surely must know it," replied Little Red Riding Hood.

The wolf thought to himself, "What a tender young creature. What a nice plump mouthful, she will be better to eat than the old woman. I must act craftily, so as to catch both." So he walked for a short time by the side of Little Red Riding Hood, and then he said, "see Little Red Riding Hood, how pretty the flowers are about here. Why do you not look round. I believe, too, that you do not hear how sweetly the little birds are singing. You walk gravely along as if you were going to school, while everything else out here in the wood is merry."

Little Red Riding Hood raised her eyes, and when she saw the sunbeams dancing here and there through the trees, and pretty flowers growing everywhere, she thought, suppose I take grandmother a fresh nosegay. That would please her too. It is so early in the day that I shall still get there in good time. And so she ran from the path into the wood to look for flowers. And whenever she had picked one, she fancied that she saw a still prettier one farther on, and ran after it, and so got deeper and deeper into the wood.

Meanwhile the wolf ran straight to the grandmother's house and knocked at the door.

"Who is there?"

"Little Red Riding Hood," replied the wolf. "She is bringing cake and wine. Open the door."

"Lift the latch," called out the grandmother, "I am too weak, and cannot get up."

The wolf lifted the latch, the door sprang open, and without saying a word he went straight to the grandmother's bed, and devoured her. Then he put on her clothes, dressed himself in her cap, laid himself in bed and drew the curtains.

Little Red Riding Hood, however, had been running about picking flowers, and when she had gathered so many that she could carry no more, she remembered her grandmother, and set out on the way to her.

She was surprised to find the cottage-door standing open, and when she went into the room, she had such a strange feeling that she said to herself, oh dear, how uneasy I feel to-day, and at other times I like being with grandmother so much.

She called out, "Good morning," but received no answer. So she went to the bed and drew back the curtains. There lay her grandmother with her cap pulled far over her face, and looking very strange.

"Oh, grandmother," she said, "what big ears you have."

"The better to hear you with, my child," was the reply.

"But, grandmother, what big eyes you have," she said.

"The better to see you with, my dear."

"But, grandmother, what large hands you have."

"The better to hug you with."

"Oh, but, grandmother, what a terrible big mouth you have."

"The better to eat you with."

And scarcely had the wolf said this, than with one bound he was out of bed and swallowed up Little Red Riding Hood.

When the wolf had appeased his appetite, he lay down again in the bed, fell asleep and began to snore very loud. The huntsman was just passing the house, and thought to himself, how the old woman is snoring. I must just see if she wants anything.

So he went into the room, and when he came to the bed, he saw that the wolf was lying in it. "Do I find you here, you old sinner," said he. "I have long sought you."

Then just as he was going to fire at him, it occurred to him that the wolf might have devoured the grandmother, and that she might still be saved, so he did not fire, but took a pair of scissors, and began to cut open the stomach of the sleeping wolf.

When he had made two snips, he saw the Little Red Riding Hood shining, and then he made two snips more, and the little girl sprang out, crying, "Ah, how frightened I have been. How dark it was inside the wolf."

And after that the aged grandmother came out alive also, but scarcely able to breathe. Little Red Riding Hood, however, quickly fetched great stones with which they filled the wolf's belly, and when he awoke, he wanted to run away, but the stones were so heavy that he collapsed at once, and fell dead.

Then all three were delighted. The huntsman drew off the wolf's skin and went home with it. The grandmother ate the cake and drank the wine which Little Red Riding Hood had brought, and revived, but Little Red Riding Hood thought to herself, as long as I live, I will never by myself leave the path, to run into the wood, when my mother has forbidden me to do so.It is also related that once when Little Red Riding Hood was again taking cakes to the old grandmother, another wolf spoke to her, and tried to entice her from the path. Little Red Riding Hood, however, was on her guard, and went straight forward on her way, and told her grandmother that she had met the wolf, and that he had said good-morning to her, but with such a wicked look in his eyes, that if they had not been on the public road she was certain he would have eaten her up. "Well," said the grandmother, "we will shut the door, that he may not come in." Soon afterwards the wolf knocked, and cried, "open the door, grandmother, I am Little Red Riding Hood, and am bringing you some cakes." But they did not speak, or open the door, so the grey-beard stole twice or thrice round the house, and at last jumped on the roof, intending to wait until Little Red Riding Hood went home in the evening, and then to steal after her and devour her in the darkness. But the grandmother saw what was in his thoughts. In front of the house was a great stone trough, so she said to the child, take the pail, Little Red Riding Hood. I made some sausages yesterday, so carry the water in which I boiled them to the trough. Little Red Riding Hood carried until the great trough was quite full. Then the smell of the sausages reached the wolf, and he sniffed and peeped down, and at last stretched out his neck so far that he could no longer keep his footing and began to slip, and slipped down from the roof straight into the great trough, and was drowned. 
But Little Red Riding Hood went joyously home, and no one ever did anything to harm her again.

"""

text1 = "Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems."

from transformers import pipeline, AutoTokenizer, AutoModelForMaskedLM

import torch

def watermark_text(text, model_name="bert-base-uncased", offset=0):
    # Normalize whitespace and split the input text into words
    text = " ".join(text.split())
    words = text.split()

    # Replace every fifth word with [MASK], starting from the offset
    for i in range(offset, len(words)):
        if (i + 1 - offset) % 5 == 0:
            words[i] = '[MASK]'

    # Load the tokenizer and model. The pipeline moves the model to the GPU
    # when one is available (device=0) and keeps it on the CPU otherwise
    # (device=-1), so the model is not moved by hand: model.to(-1) would fail.
    device = 0 if torch.cuda.is_available() else -1
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForMaskedLM.from_pretrained(model_name)

    # Initialize the fill-mask pipeline
    classifier = pipeline("fill-mask", model=model, tokenizer=tokenizer, device=device)

    # Make a copy of the words list to modify it
    watermarked_words = words.copy()

    # Fill one mask at a time: each chunk runs from the start of the text up to
    # (but not including) the next unfilled [MASK], so it normally holds one mask
    for i in range(offset, len(words), 5):
        chunk = " ".join(watermarked_words[:i+9])
        if '[MASK]' in chunk:
            try:
                tempd = classifier(chunk)
            except Exception as e:
                print(f"Error processing chunk '{chunk}': {e}")
                continue
            if tempd:
                # Take the top-scoring prediction for the mask
                templ = tempd[0]
                temps = templ['token_str']
                watermarked_words[i+4] = temps.split()[0]
                # print("Done ", i + 1, "th word")

    # Output the results
    # print("Original Text:")
    # print(text)
    # print("Watermark Areas:")
    # print(" ".join(words))
    # print("Watermarked Text:")
    # print(" ".join(watermarked_words))

    return " ".join(watermarked_words)
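The masking rule in `watermark_text` can be checked without loading a model. The sketch below is only an illustration: `mask_positions` and `mask_words` are hypothetical helper names that do not appear in the notebook, and they reproduce nothing more than the `(i + 1 - offset) % 5 == 0` test used above.

```python
def mask_positions(num_words, offset=0, step=5):
    """Return the word indices that the masking loop replaces with [MASK]."""
    return [i for i in range(offset, num_words) if (i + 1 - offset) % step == 0]


def mask_words(text, offset=0, step=5, mask_token="[MASK]"):
    """Apply the same every-fifth-word masking to a whitespace-split text."""
    words = text.split()
    for i in mask_positions(len(words), offset, step):
        words[i] = mask_token
    return " ".join(words)


sample = ("Quantum computing is a rapidly evolving field that leverages "
          "the principles of quantum mechanics")

print(mask_positions(len(sample.split()), offset=0))  # [4, 9]
print(mask_positions(len(sample.split()), offset=2))  # [6, 11]
print(mask_words(sample, offset=0))
```

Changing `offset` shifts every masked index by the same amount, which is why the detection step later scans offsets 0 through 4.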

# Example usage

text = "Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems."

watermark_text(text, offset=0)

Some weights of the model checkpoint at bert-base-uncased were not used when initializing BertForMaskedLM: ['bert.pooler.dense.bias', 'bert.pooler.dense.weight', 'cls.seq_relationship.bias', 'cls.seq_relationship.weight']

- This IS expected if you are initializing BertForMaskedLM from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model).

- This IS NOT expected if you are initializing BertForMaskedLM from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model).

'Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are impossible for classical computers. Unlike quantum computers, which use bits as the fundamental unit of , quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously according to the principles of symmetry and entanglement, providing a significant advantage in solving complex mathematical problems.'

from transformers import pipeline, AutoTokenizer, AutoModelForMaskedLM

import torch

def watermark_text_and_calculate_matches(text, model_name="bert-base-uncased", max_offset=5):
    # Normalize whitespace and split the input text into words
    text = " ".join(text.split())
    words = text.split()

    # Load the tokenizer and model. The pipeline handles device placement
    # (device=0 for the first GPU, device=-1 for the CPU), so the model is not
    # moved by hand: model.to(-1) would fail on a CPU-only machine.
    device = 0 if torch.cuda.is_available() else -1
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForMaskedLM.from_pretrained(model_name)

    # Initialize the fill-mask pipeline
    classifier = pipeline("fill-mask", model=model, tokenizer=tokenizer, device=device)

    # Dictionary to store match ratios for each offset
    match_ratios = {}

    # Loop over each offset
    for offset in range(max_offset):
        # Replace every fifth word with [MASK], starting from the offset
        modified_words = words.copy()
        for i in range(offset, len(modified_words)):
            if (i + 1 - offset) % 5 == 0:
                modified_words[i] = '[MASK]'

        # Make a copy of the modified words list to work on
        watermarked_words = modified_words.copy()
        total_replacements = 0
        total_matches = 0

        # Process the text in chunks, filling one mask per chunk
        for i in range(offset, len(modified_words), 5):
            chunk = " ".join(watermarked_words[:i+9])
            if '[MASK]' in chunk:
                try:
                    tempd = classifier(chunk)
                except Exception as e:
                    print(f"Error processing chunk '{chunk}': {e}")
                    continue
                if tempd:
                    # Compare the top prediction with the word found in the text
                    templ = tempd[0]
                    temps = templ['token_str']
                    original_word = words[i+4]
                    replaced_word = temps.split()[0]
                    watermarked_words[i+4] = replaced_word

                    # Increment total replacements and matches
                    total_replacements += 1
                    if replaced_word == original_word:
                        total_matches += 1

        # Calculate the match ratio for the current offset
        if total_replacements > 0:
            match_ratio = total_matches / total_replacements
        else:
            match_ratio = 0
        match_ratios[offset] = match_ratio

    # Return the match ratios for each offset
    return match_ratios

# Example usage

text = "Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems."

# Calculate match ratios

match_ratios = watermark_text_and_calculate_matches(text, max_offset=5)

print(match_ratios)

{0: 0.5384615384615384, 1: 0.6153846153846154, 2: 0.5833333333333334, 3: 0.6666666666666666, 4: 0.5833333333333334}

from scipy.stats import ttest_1samp

import numpy as np

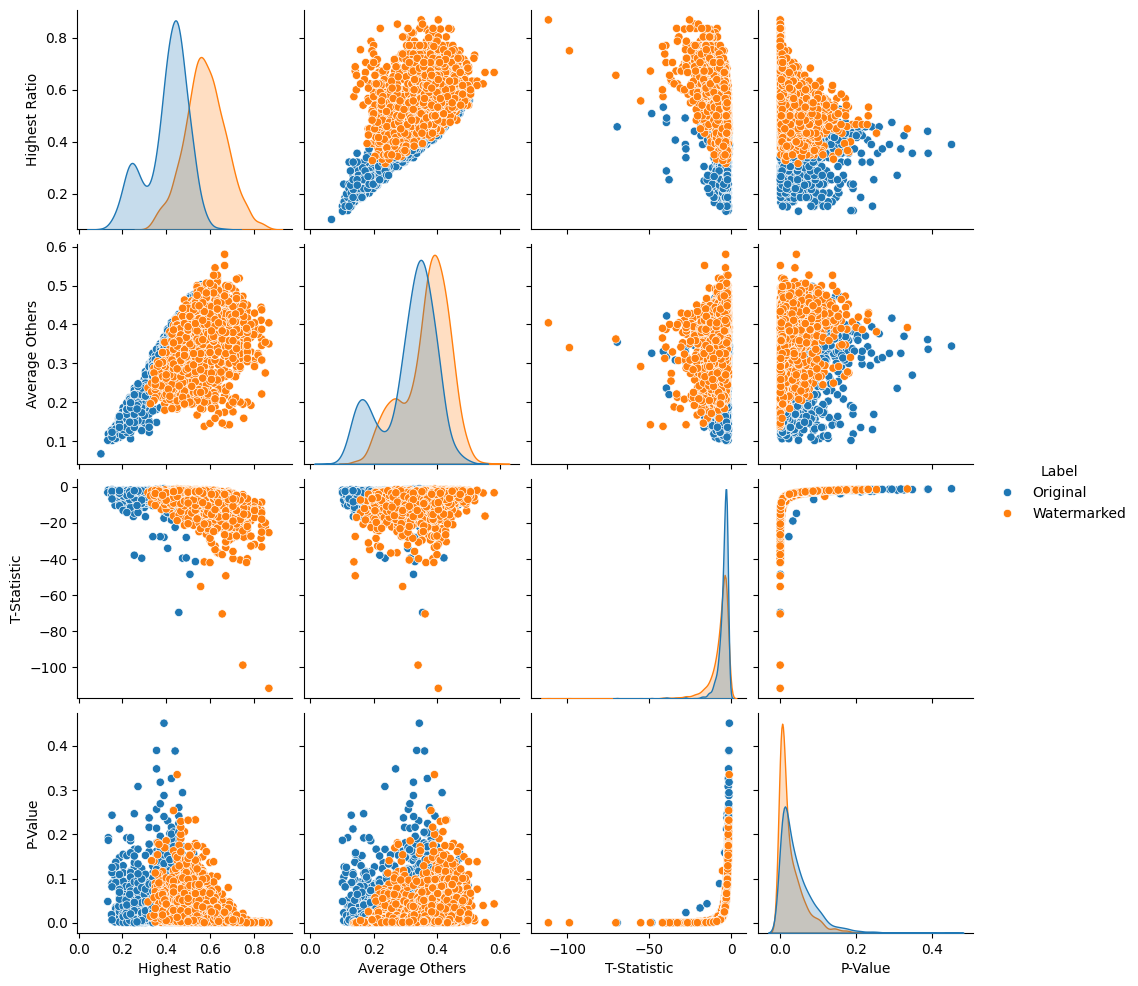

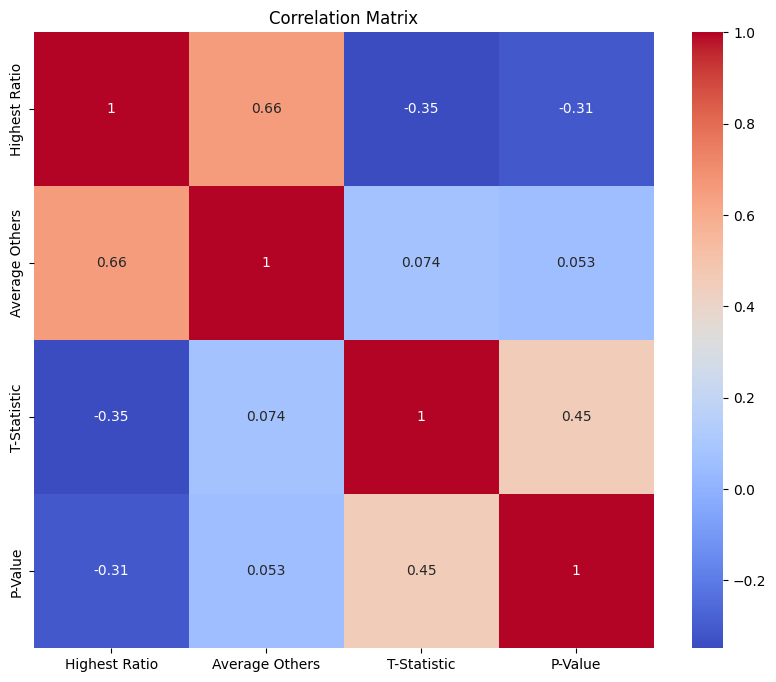

def check_significant_difference(match_ratios):
    # Extract ratios into a list
    ratios = list(match_ratios.values())

    # Find the highest ratio
    highest_ratio = max(ratios)

    # Collect the other ratios, dropping only one occurrence of the maximum
    # so that values tied with it still count as "others"
    other_ratios = ratios.copy()
    other_ratios.remove(highest_ratio)
    average_other_ratios = np.mean(other_ratios)

    # One-sample t-test: does the mean of the other ratios differ from the highest ratio?
    t_stat, p_value = ttest_1samp(other_ratios, highest_ratio)

    # Print the results
    print(f"Highest Match Ratio: {highest_ratio}")
    print(f"Average of Other Ratios: {average_other_ratios}")
    print(f"T-Statistic: {t_stat}")
    print(f"P-Value: {p_value}")

    # Determine if the difference is statistically significant (e.g., at the 0.05 significance level)
    if p_value < 0.05:
        print("The highest ratio is significantly different from the others.")
    else:
        print("The highest ratio is not significantly different from the others.")

    return [highest_ratio, average_other_ratios, t_stat, p_value]

# Example usage

text = "Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems."

# match_ratios = watermark_text_and_calculate_matches(text, max_offset=5)

# check_significant_difference(match_ratios)
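To make the statistic in `check_significant_difference` less opaque, the one-sample t value can be recomputed by hand. This is a minimal sketch using only the standard library; `one_sample_t` is a hypothetical helper name, and the ratios are copied from the match-ratio dictionary printed further down in this notebook for the modified text.

```python
import math
import statistics


def one_sample_t(sample, popmean):
    """t = (sample mean - popmean) / (sample std / sqrt(n)), with ddof=1."""
    n = len(sample)
    mean = statistics.mean(sample)
    std = statistics.stdev(sample)  # sample standard deviation (n - 1 denominator)
    return (mean - popmean) / (std / math.sqrt(n))


# Ratios taken from the notebook output for the modified text
ratios = {0: 0.5714285714285714, 1: 0.5714285714285714,
          2: 0.5384615384615384, 3: 0.38461538461538464,
          4: 0.7692307692307693}

highest = max(ratios.values())
others = [r for r in ratios.values() if r != highest]

t_stat = one_sample_t(others, highest)
print(t_stat)  # about -5.6622, matching the T-Statistic in the notebook output
```

With only four values in the comparison sample the test has three degrees of freedom, and the reference value is the maximum chosen from the same set of ratios, so the resulting p-value is probably best read as a rough indicator.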

import random

def randomly_add_words(text, words_to_add, num_words_to_add):
    # Normalize whitespace and split the input text into words
    text = " ".join(text.split())
    words = text.split()

    # Insert words randomly into the text
    for _ in range(num_words_to_add):
        # Choose a random position to insert the word
        position = random.randint(0, len(words))

        # Choose a random word to insert
        word_to_insert = random.choice(words_to_add)

        # Insert the word at the random position
        words.insert(position, word_to_insert)

    # Join the list back into a string and return the modified text
    modified_text = " ".join(words)
    return modified_text
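Because `randomly_add_words` draws from the global `random` state, each run perturbs the text differently, and every inserted word shifts the positions of all words after it. For repeatable experiments, a seeded variant may be convenient. The sketch below is an assumption-laden illustration: `add_words_seeded` and its `seed` parameter are hypothetical and not part of the notebook.

```python
import random


def add_words_seeded(text, words_to_add, num_words_to_add, seed=0):
    """Same insertion logic as randomly_add_words, but with a local seeded generator."""
    rng = random.Random(seed)  # local generator; the global random state is untouched
    words = text.split()
    for _ in range(num_words_to_add):
        position = rng.randint(0, len(words))  # inclusive, so appending is possible
        words.insert(position, rng.choice(words_to_add))
    return " ".join(words)


original = "Qubits can exist in multiple states simultaneously"
fillers = ["example", "test"]

noisy = add_words_seeded(original, fillers, 3, seed=42)
print(noisy)

# The same seed reproduces the same perturbation
print(noisy == add_words_seeded(original, fillers, 3, seed=42))  # True
```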

# Example usage

text = "Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems."

words_to_add = ["example", "test", "random", "insert"]

num_words_to_add = 5

# modified_text = randomly_add_words(text, words_to_add, num_words_to_add)

modified_text = randomly_add_words(watermark_text(text, offset=0), words_to_add, num_words_to_add)

print("Original Text:")

print(text)

print("\nModified Text:")

print(modified_text)

match_ratios = watermark_text_and_calculate_matches(modified_text, max_offset=5)

print(match_ratios)

check_significant_difference(match_ratios)

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Original Text:

Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems.

Watermark Areas:

Quantum computing is a [MASK] evolving field that leverages [MASK] principles of quantum mechanics [MASK] perform computations that are [MASK] for classical computers. Unlike [MASK] computers, which use bits [MASK] the fundamental unit of [MASK] quantum computers use quantum [MASK] or qubits. Qubits can [MASK] in multiple states simultaneously [MASK] to the principles of [MASK] and entanglement, providing a [MASK] advantage in solving complex [MASK] problems.

Watermarked Text:

Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are impossible for classical computers. Unlike quantum computers, which use bits as the fundamental unit of , quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously according to the principles of symmetry and entanglement, providing a significant advantage in solving complex mathematical problems.

Original Text:

Quantum computing is a rapidly evolving field that leverages the principles of quantum mechanics to perform computations that are infeasible for classical computers. Unlike classical computers, which use bits as the fundamental unit of information, quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously due to the principles of superposition and entanglement, providing a significant advantage in solving complex computational problems.

Modified Text:

Quantum computing is example a rapidly evolving field that leverages the principles of quantum mechanics to perform random computations that are impossible for classical computers. Unlike quantum computers, which use bits as the random insert fundamental unit of , quantum computers use quantum bits or qubits. Qubits can exist in multiple states simultaneously according random to the principles of symmetry and entanglement, providing a significant advantage in solving complex mathematical problems.

{0: 0.5714285714285714, 1: 0.5714285714285714, 2: 0.5384615384615384, 3: 0.38461538461538464, 4: 0.7692307692307693}

Highest Match Ratio: 0.7692307692307693

Average of Other Ratios: 0.5164835164835164

T-Statistic: -5.66220858504931

P-Value: 0.010908789440745323

The highest ratio is significantly different from the others.

[0.7692307692307693, 0.5164835164835164, -5.66220858504931, 0.010908789440745323]

texts = [

"Artificial intelligence (AI) has seen remarkable advancements in recent years, transforming numerous industries. From healthcare to finance, AI technologies are being leveraged to improve efficiency and decision-making. In healthcare, AI algorithms are being used to analyze medical images, predict patient outcomes, and assist in surgery. Finance professionals are using AI for fraud detection, risk management, and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that AI systems are transparent and fair is critical for their continued adoption and trust. As AI continues to evolve, it is essential to consider both its potential benefits and challenges.",

"Climate change is one of the most pressing issues facing our planet today. Rising global temperatures, melting ice caps, and increasing frequency of extreme weather events are all indicators of this phenomenon. Scientists warn that without significant action to reduce greenhouse gas emissions, the effects of climate change will become more severe. Renewable energy sources such as solar, wind, and hydro power are being promoted as sustainable alternatives to fossil fuels. Additionally, individuals can make a difference by reducing their carbon footprint through actions like using public transportation, conserving energy, and supporting policies aimed at environmental protection.",

"The field of biotechnology is revolutionizing medicine and agriculture. Advances in genetic engineering have enabled scientists to develop crops that are resistant to pests and diseases, as well as produce higher yields. In medicine, biotechnology is being used to create personalized treatments based on an individual's genetic makeup. This approach, known as precision medicine, aims to provide more effective and targeted therapies for various diseases. However, the rapid pace of biotechnological innovation also raises ethical and regulatory questions. It is crucial to balance the benefits of these technologies with the potential risks and ensure that they are used responsibly.",

"Quantum computing is poised to revolutionize the world of computing. Unlike classical computers, which use bits to represent data as 0s and 1s, quantum computers use qubits, which can exist in multiple states simultaneously. This allows quantum computers to perform complex calculations much faster than their classical counterparts. Potential applications of quantum computing include cryptography, drug discovery, and optimization problems. However, building a practical and scalable quantum computer remains a significant challenge. Researchers are exploring various approaches, such as superconducting qubits and trapped ions, to overcome these hurdles and bring quantum computing closer to reality.",

"The internet of things (IoT) is transforming the way we interact with the world around us. IoT refers to the network of interconnected devices that collect and exchange data. These devices range from smart home appliances to industrial sensors, and their applications are vast. In the home, IoT devices can automate tasks like adjusting the thermostat, turning off lights, and monitoring security systems. In industry, IoT is used to optimize supply chains, monitor equipment health, and improve safety. However, the proliferation of IoT devices also raises concerns about security and privacy. Ensuring that these devices are secure and that data is protected is essential for the continued growth of IoT.",

"Renewable energy is gaining momentum as a viable solution to the world's energy needs. Solar, wind, and hydro power are among the most common forms of renewable energy, and they offer a sustainable alternative to fossil fuels. Solar power harnesses energy from the sun using photovoltaic cells, while wind power generates electricity through turbines. Hydropower uses the energy of flowing water to produce electricity. These technologies are being adopted at an increasing rate as countries seek to reduce their carbon emissions and transition to cleaner energy sources. The growth of renewable energy is not without challenges, including the need for improved energy storage solutions and the integration of these technologies into existing power grids.",

"The rise of e-commerce has transformed the retail industry. Online shopping has become increasingly popular, offering consumers convenience and a wide range of products at their fingertips. Major e-commerce platforms like Amazon, Alibaba, and eBay have disrupted traditional brick-and-mortar stores, leading to significant changes in consumer behavior. The COVID-19 pandemic further accelerated the shift to online shopping, as lockdowns and social distancing measures limited in-person shopping. While e-commerce offers many benefits, it also presents challenges, such as the need for efficient logistics and concerns about data privacy. As the industry continues to evolve, companies are exploring new technologies like augmented reality and artificial intelligence to enhance the online shopping experience.",

"Cybersecurity is a critical concern in today's digital age. With the increasing reliance on technology and the internet, the risk of cyberattacks has grown significantly. Cybercriminals use various methods, such as phishing, ransomware, and malware, to exploit vulnerabilities in systems and steal sensitive information. Organizations must implement robust cybersecurity measures to protect their data and infrastructure. This includes using encryption, multi-factor authentication, and regular security audits. Additionally, individuals can take steps to safeguard their personal information, such as using strong passwords and being cautious of suspicious emails. As cyber threats continue to evolve, staying informed and vigilant is essential for maintaining cybersecurity.",

"The field of robotics is advancing rapidly, with applications ranging from manufacturing to healthcare. Industrial robots are used to automate repetitive tasks, improve precision, and increase efficiency in manufacturing processes. In healthcare, robots assist in surgeries, rehabilitation, and patient care. Social robots are being developed to provide companionship and support for the elderly and individuals with disabilities. The integration of artificial intelligence and machine learning has further enhanced the capabilities of robots, enabling them to perform complex tasks and adapt to new situations. However, the rise of robotics also raises ethical and societal questions, such as the impact on employment and the need for responsible development and use of these technologies.",

"Space exploration has captured the imagination of humanity for centuries. Recent advancements in technology have made space missions more feasible and ambitious. Private companies like SpaceX and Blue Origin are playing a significant role in this new era of space exploration. SpaceX's successful launches and plans for Mars colonization have reignited interest in space travel. NASA and other space agencies are also focusing on missions to the Moon, Mars, and beyond. The development of new propulsion systems, space habitats, and life support technologies are critical for the success of these missions. While space exploration holds great promise, it also presents challenges, including the need for international cooperation, funding, and addressing the environmental impact of space activities.",

"Climate change is driving the need for sustainable agriculture practices. Traditional farming methods often rely on chemical fertilizers and pesticides, which can harm the environment and human health. Sustainable agriculture aims to reduce the negative impact of farming by promoting practices that conserve resources, protect biodiversity, and improve soil health. Techniques such as crop rotation, cover cropping, and organic farming are being adopted by farmers worldwide. Additionally, advances in agricultural technology, such as precision farming and vertical farming, are helping to increase efficiency and reduce waste. By embracing sustainable agriculture, we can ensure food security for future generations while protecting the planet.",

"The rise of electric vehicles (EVs) is transforming the automotive industry. EVs offer a cleaner and more sustainable alternative to traditional gasoline-powered vehicles, with lower emissions and reduced dependence on fossil fuels. Major automakers are investing heavily in EV technology, and the market for electric cars is growing rapidly. Advances in battery technology are improving the range and performance of EVs, making them more practical for everyday use. Governments around the world are also supporting the transition to electric vehicles through incentives, subsidies, and the development of charging infrastructure. While challenges remain, such as the need for widespread charging stations and the environmental impact of battery production, the future of transportation is increasingly electric.",

"Artificial intelligence (AI) is transforming the field of education. AI-powered tools and platforms are being used to personalize learning, automate administrative tasks, and provide real-time feedback to students. Personalized learning systems use AI algorithms to analyze student performance and tailor instruction to individual needs. This approach can help improve student outcomes by addressing learning gaps and providing targeted support. AI is also being used to create adaptive assessments, intelligent tutoring systems, and virtual learning environments. While AI in education offers many benefits, it also raises questions about data privacy, the role of teachers, and the need for equitable access to technology. As AI continues to evolve, it has the potential to revolutionize the way we teach and learn.",

"The field of renewable energy is experiencing significant growth as countries seek to reduce their carbon emissions and transition to cleaner energy sources. Solar, wind, and hydro power are among the most common forms of renewable energy, and they offer a sustainable alternative to fossil fuels. Solar power harnesses energy from the sun using photovoltaic cells, while wind power generates electricity through turbines. Hydropower uses the energy of flowing water to produce electricity. These technologies are being adopted at an increasing rate, driven by advancements in technology, falling costs, and supportive government policies. The growth of renewable energy is not without challenges, including the need for improved energy storage solutions and the integration of these technologies into existing power grids.",

"The COVID-19 pandemic has had a profound impact on the world, affecting nearly every aspect of daily life. The pandemic has led to widespread illness, loss of life, and economic disruption. Healthcare systems have been stretched to their limits, and the need for effective treatments and vaccines has become paramount. Scientists and researchers have worked tirelessly to develop vaccines and treatments for COVID-19, leading to the rapid development and distribution of several effective vaccines. The pandemic has also highlighted the importance of public health measures, such as social distancing, mask-wearing, and hand hygiene. As the world continues to grapple with the pandemic, efforts to prevent future outbreaks and improve global health infrastructure are essential.",

"The concept of smart cities is gaining traction as urban areas look for ways to improve efficiency, sustainability, and quality of life for residents. Smart cities leverage technology and data to optimize city services, such as transportation, energy, and waste management. For example, smart traffic management systems can reduce congestion and improve air quality by adjusting traffic signals in real-time based on traffic flow. Smart grids can enhance energy efficiency by balancing supply and demand and integrating renewable energy sources. Additionally, smart waste management systems use sensors to monitor waste levels and optimize collection routes. While smart cities offer many benefits, they also raise concerns about data privacy, cybersecurity, and the need for equitable access to technology.",

"The field of biotechnology is revolutionizing medicine and agriculture. Advances in genetic engineering have enabled scientists to develop crops that are resistant to pests and diseases, as well as produce higher yields. In medicine, biotechnology is being used to create personalized treatments based on an individual's genetic makeup. This approach, known as precision medicine, aims to provide more effective and targeted therapies for various diseases. However, the rapid pace of biotechnological innovation also raises ethical and regulatory questions. It is crucial to balance the benefits of these technologies with the potential risks and ensure that they are used responsibly.",

"The rise of renewable energy is transforming the global energy landscape. Solar, wind, and hydro power are among the most common forms of renewable energy, and they offer a sustainable alternative to fossil fuels. Solar power harnesses energy from the sun using photovoltaic cells, while wind power generates electricity through turbines. Hydropower uses the energy of flowing water to produce electricity. These technologies are being adopted at an increasing rate as countries seek to reduce their carbon emissions and transition to cleaner energy sources. The growth of renewable energy is not without challenges, including the need for improved energy storage solutions and the integration of these technologies into existing power grids.",

"The field of cybersecurity is becoming increasingly important as our reliance on technology and the internet grows. Cyberattacks can have devastating consequences, including the theft of sensitive information, financial loss, and damage to an organization's reputation. Cybercriminals use various methods, such as phishing, ransomware, and malware, to exploit vulnerabilities in systems. Organizations must implement robust cybersecurity measures to protect their data and infrastructure. This includes using encryption, multi-factor authentication, and regular security audits. Additionally, individuals can take steps to safeguard their personal information, such as using strong passwords and being cautious of suspicious emails. As cyber threats continue to evolve, staying informed and vigilant is essential for maintaining cybersecurity.",

"The rise of e-commerce has transformed the retail industry. Online shopping has become increasingly popular, offering consumers convenience and a wide range of products at their fingertips. Major e-commerce platforms like Amazon, Alibaba, and eBay have disrupted traditional brick-and-mortar stores, leading to significant changes in consumer behavior. The COVID-19 pandemic further accelerated the shift to online shopping, as lockdowns and social distancing measures limited in-person shopping. While e-commerce offers many benefits, it also presents challenges, such as the need for efficient logistics and concerns about data privacy. As the industry continues to evolve, companies are exploring new technologies like augmented reality and artificial intelligence to enhance the online shopping experience.",

"Artificial intelligence (AI) is transforming the field of healthcare. AI-powered tools and platforms are being used to analyze medical images, predict patient outcomes, and assist in surgery. In radiology, AI algorithms can help detect abnormalities in medical images, such as tumors or fractures, with high accuracy. In predictive analytics, AI can analyze patient data to identify individuals at risk of developing certain conditions, allowing for early intervention and personalized treatment plans. AI is also being used in robotic surgery, where it can enhance precision and reduce the risk of complications. While AI in healthcare offers many benefits, it also raises questions about data privacy, the role of healthcare professionals, and the need for regulatory oversight.",

"The field of renewable energy is experiencing significant growth as countries seek to reduce their carbon emissions and transition to cleaner energy sources. Solar, wind, and hydro power are among the most common forms of renewable energy, and they offer a sustainable alternative to fossil fuels. Solar power harnesses energy from the sun using photovoltaic cells, while wind power generates electricity through turbines. Hydropower uses the energy of flowing water to produce electricity. These technologies are being adopted at an increasing rate, driven by advancements in technology, falling costs, and supportive government policies. The growth of renewable energy is not without challenges, including the need for improved energy storage solutions and the integration of these technologies into existing power grids.",

"The COVID-19 pandemic has had a profound impact on the world, affecting nearly every aspect of daily life. The pandemic has led to widespread illness, loss of life, and economic disruption. Healthcare systems have been stretched to their limits, and the need for effective treatments and vaccines has become paramount. Scientists and researchers have worked tirelessly to develop vaccines and treatments for COVID-19, leading to the rapid development and distribution of several effective vaccines. The pandemic has also highlighted the importance of public health measures, such as social distancing, mask-wearing, and hand hygiene. As the world continues to grapple with the pandemic, efforts to prevent future outbreaks and improve global health infrastructure are essential.",

"The concept of smart cities is gaining traction as urban areas look for ways to improve efficiency, sustainability, and quality of life for residents. Smart cities leverage technology and data to optimize city services, such as transportation, energy, and waste management. For example, smart traffic management systems can reduce congestion and improve air quality by adjusting traffic signals in real-time based on traffic flow. Smart grids can enhance energy efficiency by balancing supply and demand and integrating renewable energy sources. Additionally, smart waste management systems use sensors to monitor waste levels and optimize collection routes. While smart cities offer many benefits, they also raise concerns about data privacy, cybersecurity, and the need for equitable access to technology.",

"The field of biotechnology is revolutionizing medicine and agriculture. Advances in genetic engineering have enabled scientists to develop crops that are resistant to pests and diseases, as well as produce higher yields. In medicine, biotechnology is being used to create personalized treatments based on an individual's genetic makeup. This approach, known as precision medicine, aims to provide more effective and targeted therapies for various diseases. However, the rapid pace of biotechnological innovation also raises ethical and regulatory questions. It is crucial to balance the benefits of these technologies with the potential risks and ensure that they are used responsibly.",

"The rise of renewable energy is transforming the global energy landscape. Solar, wind, and hydro power are among the most common forms of renewable energy, and they offer a sustainable alternative to fossil fuels. Solar power harnesses energy from the sun using photovoltaic cells, while wind power generates electricity through turbines. Hydropower uses the energy of flowing water to produce electricity. These technologies are being adopted at an increasing rate as countries seek to reduce their carbon emissions and transition to cleaner energy sources. The growth of renewable energy is not without challenges, including the need for improved energy storage solutions and the integration of these technologies into existing power grids.",

"The field of cybersecurity is becoming increasingly important as our reliance on technology and the internet grows. Cyberattacks can have devastating consequences, including the theft of sensitive information, financial loss, and damage to an organization's reputation. Cybercriminals use various methods, such as phishing, ransomware, and malware, to exploit vulnerabilities in systems. Organizations must implement robust cybersecurity measures to protect their data and infrastructure. This includes using encryption, multi-factor authentication, and regular security audits. Additionally, individuals can take steps to safeguard their personal information, such as using strong passwords and being cautious of suspicious emails. As cyber threats continue to evolve, staying informed and vigilant is essential for maintaining cybersecurity.",

"The rise of e-commerce has transformed the retail industry. Online shopping has become increasingly popular, offering consumers convenience and a wide range of products at their fingertips. Major e-commerce platforms like Amazon, Alibaba, and eBay have disrupted traditional brick-and-mortar stores, leading to significant changes in consumer behavior. The COVID-19 pandemic further accelerated the shift to online shopping, as lockdowns and social distancing measures limited in-person shopping. While e-commerce offers many benefits, it also presents challenges, such as the need for efficient logistics and concerns about data privacy. As the industry continues to evolve, companies are exploring new technologies like augmented reality and artificial intelligence to enhance the online shopping experience.",

"Artificial intelligence (AI) is transforming the field of healthcare. AI-powered tools and platforms are being used to analyze medical images, predict patient outcomes, and assist in surgery. In radiology, AI algorithms can help detect abnormalities in medical images, such as tumors or fractures, with high accuracy. In predictive analytics, AI can analyze patient data to identify individuals at risk of developing certain conditions, allowing for early intervention and personalized treatment plans. AI is also being used in robotic surgery, where it can enhance precision and reduce the risk of complications. While AI in healthcare offers many benefits, it also raises questions about data privacy, the role of healthcare professionals, and the need for regulatory oversight.",

]

text = texts[0]

words_to_add = ["example", "test", "random", "insert"]

num_words_to_add = 5

# modified_text = randomly_add_words(text, words_to_add, num_words_to_add)

modified_text = randomly_add_words(watermark_text(text, offset=0), words_to_add, num_words_to_add)

print("Original Text:")

print(text)

print("\nModified Text:")

print(modified_text)

match_ratios = watermark_text_and_calculate_matches(modified_text, max_offset=5)

print(match_ratios)

check_significant_difference(match_ratios)

Some weights of the model checkpoint at bert-base-uncased were not used when initializing BertForMaskedLM: ['bert.pooler.dense.bias', 'bert.pooler.dense.weight', 'cls.seq_relationship.bias', 'cls.seq_relationship.weight']

- This IS expected if you are initializing BertForMaskedLM from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model).

- This IS NOT expected if you are initializing BertForMaskedLM from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model).

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Done 66 th word

Done 71 th word

Done 76 th word

Done 81 th word

Done 86 th word

Done 91 th word

Done 96 th word

Original Text:

Artificial intelligence (AI) has seen remarkable advancements in recent years, transforming numerous industries. From healthcare to finance, AI technologies are being leveraged to improve efficiency and decision-making. In healthcare, AI algorithms are being used to analyze medical images, predict patient outcomes, and assist in surgery. Finance professionals are using AI for fraud detection, risk management, and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that AI systems are transparent and fair is critical for their continued adoption and trust. As AI continues to evolve, it is essential to consider both its potential benefits and challenges.

Watermark Areas:

Artificial intelligence (AI) has [MASK] remarkable advancements in recent [MASK] transforming numerous industries. From [MASK] to finance, AI technologies [MASK] being leveraged to improve [MASK] and decision-making. In healthcare, [MASK] algorithms are being used [MASK] analyze medical images, predict [MASK] outcomes, and assist in [MASK] Finance professionals are using [MASK] for fraud detection, risk [MASK] and algorithmic trading. Despite [MASK] advancements, AI also raises [MASK] concerns, particularly regarding bias [MASK] privacy. Ensuring that AI [MASK] are transparent and fair [MASK] critical for their continued [MASK] and trust. As AI [MASK] to evolve, it is [MASK] to consider both its [MASK] benefits and challenges.

Watermarked Text:

Artificial intelligence (AI) has made remarkable advancements in recent years transforming numerous industries. From manufacturing to finance, AI technologies are being leveraged to improve performance and decision-making. In healthcare, ai algorithms are being used to analyze medical images, predict patient outcomes, and assist in how Finance professionals are using them for fraud detection, risk management and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that AI algorithms are transparent and fair is critical for their continued integrity and trust. As AI continues to evolve, it is important to consider both its potential benefits and challenges.
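The "Watermark Areas" line above replaces every fifth whitespace-separated word with `[MASK]` (word indices 4, 9, 14, ... for `offset=0`). A minimal sketch of that masking pattern follows. The helper name `mask_every_nth_word` and the way `offset` shifts the pattern are assumptions made for illustration, not the notebook's own implementation.

```python
def mask_every_nth_word(text, n=5, offset=0, mask_token="[MASK]"):
    """Replace every n-th whitespace-separated word with mask_token.

    Hypothetical helper: with n=5 and offset=0 it masks word indices
    4, 9, 14, ..., which matches the "Watermark Areas" output.
    Shifting the start by `offset` is an assumption about the notebook.
    """
    words = text.split()
    for i in range(offset + n - 1, len(words), n):
        words[i] = mask_token
    return " ".join(words)


sample = (
    "Artificial intelligence (AI) has seen remarkable advancements "
    "in recent years, transforming numerous industries."
)
print(mask_every_nth_word(sample))
```

Because the text is split on whitespace, punctuation attached to a masked word (such as the comma in "years,") is replaced along with it, which is why the watermarked output loses some commas.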

Original Text:

Artificial intelligence (AI) has seen remarkable advancements in recent years, transforming numerous industries. From healthcare to finance, AI technologies are being leveraged to improve efficiency and decision-making. In healthcare, AI algorithms are being used to analyze medical images, predict patient outcomes, and assist in surgery. Finance professionals are using AI for fraud detection, risk management, and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that AI systems are transparent and fair is critical for their continued adoption and trust. As AI continues to evolve, it is essential to consider both its potential benefits and challenges.

Modified Text:

Artificial intelligence (AI) has made remarkable advancements in recent years transforming numerous industries. From manufacturing to finance, AI technologies are being leveraged to improve performance and decision-making. In healthcare, ai algorithms are being used to analyze medical images, predict patient outcomes, random and assist in how Finance professionals are using them for fraud example detection, risk management and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that example AI algorithms are transparent and fair test is critical for their continued integrity and trust. As AI continues to evolve, it is random important to consider both its potential benefits and challenges.

{0: 0.6190476190476191, 1: 0.3333333333333333, 2: 0.42857142857142855, 3: 0.2857142857142857, 4: 0.55}

Highest Match Ratio: 0.6190476190476191

Average of Other Ratios: 0.3994047619047619

T-Statistic: -3.765894344306259

P-Value: 0.032757613277666235

The highest ratio is significantly different from the others.
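The statistics printed above are consistent with a one-sample t-test that compares the remaining match ratios against the highest one. The sketch below is a reconstruction under that assumption: the function name `highest_vs_rest` is hypothetical, and SciPy is used only for the p-value when it is installed.

```python
import math
import statistics


def highest_vs_rest(match_ratios):
    """Compare the highest match ratio with the remaining ones.

    Assumed reconstruction: one-sample t-test of the other ratios
    against the highest ratio, with len(others) - 1 degrees of freedom.
    """
    ratios = list(match_ratios.values())
    highest = max(ratios)
    others = list(ratios)
    others.remove(highest)  # drop a single occurrence of the maximum

    average_others = statistics.mean(others)
    std_err = statistics.stdev(others) / math.sqrt(len(others))
    t_statistic = (average_others - highest) / std_err

    p_value = None
    try:
        from scipy import stats  # optional dependency, used for the p-value only
        p_value = float(stats.ttest_1samp(others, highest).pvalue)
    except ImportError:
        pass
    return highest, average_others, t_statistic, p_value


ratios = {0: 0.6190476190476191, 1: 0.3333333333333333,
          2: 0.42857142857142855, 3: 0.2857142857142857, 4: 0.55}
print(highest_vs_rest(ratios))
```

With the five ratios shown, the t-statistic comes out at about -3.77, in line with the printed value. Treating a p-value below 0.05 as "significantly different" is the usual convention, though the threshold used by `check_significant_difference` is an assumption here. With only four comparison ratios the test has three degrees of freedom, so the p-values are best read as a rough indicator.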

[0.6190476190476191,

0.3994047619047619,

-3.765894344306259,

0.032757613277666235]

list_of_significance = []

list_of_significance_watermarked = []

count_t = 0

for text in texts:

    count_t += 1

    print("___________________________________________________________________________________________________________________________")

    print("Doing", count_t)

    print("___________________________________________________________________________________________________________________________")

    words_to_add = ["example", "test", "random", "insert"]

    num_words_to_add = 5

    # modified_text = randomly_add_words(text, words_to_add, num_words_to_add)

    # Watermark the text first, then perturb it by inserting random words.
    modified_text = randomly_add_words(watermark_text(text, offset=0), words_to_add, num_words_to_add)

    # print("Original Text:")

    # print(text)

    # print("\nModified Text:")

    # print(modified_text)

    # Significance result for the watermarked, perturbed text.
    match_ratios = watermark_text_and_calculate_matches(modified_text, max_offset=5)

    # print(match_ratios)

    list_of_significance_watermarked.append(check_significant_difference(match_ratios))

    # Significance result for the original, unwatermarked text (baseline).
    match_ratios = watermark_text_and_calculate_matches(text, max_offset=5)

    list_of_significance.append(check_significant_difference(match_ratios))

    print("___________________________________________________________________________________________________________________________")

    print("Done", count_t)

    print("___________________________________________________________________________________________________________________________")

___________________________________________________________________________________________________________________________

Doing 1

___________________________________________________________________________________________________________________________

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Done 66 th word

Done 71 th word

Done 76 th word

Done 81 th word

Done 86 th word

Done 91 th word

Done 96 th word

Original Text:

Artificial intelligence (AI) has seen remarkable advancements in recent years, transforming numerous industries. From healthcare to finance, AI technologies are being leveraged to improve efficiency and decision-making. In healthcare, AI algorithms are being used to analyze medical images, predict patient outcomes, and assist in surgery. Finance professionals are using AI for fraud detection, risk management, and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that AI systems are transparent and fair is critical for their continued adoption and trust. As AI continues to evolve, it is essential to consider both its potential benefits and challenges.

Watermark Areas:

Artificial intelligence (AI) has [MASK] remarkable advancements in recent [MASK] transforming numerous industries. From [MASK] to finance, AI technologies [MASK] being leveraged to improve [MASK] and decision-making. In healthcare, [MASK] algorithms are being used [MASK] analyze medical images, predict [MASK] outcomes, and assist in [MASK] Finance professionals are using [MASK] for fraud detection, risk [MASK] and algorithmic trading. Despite [MASK] advancements, AI also raises [MASK] concerns, particularly regarding bias [MASK] privacy. Ensuring that AI [MASK] are transparent and fair [MASK] critical for their continued [MASK] and trust. As AI [MASK] to evolve, it is [MASK] to consider both its [MASK] benefits and challenges.

Watermarked Text:

Artificial intelligence (AI) has made remarkable advancements in recent years transforming numerous industries. From manufacturing to finance, AI technologies are being leveraged to improve performance and decision-making. In healthcare, ai algorithms are being used to analyze medical images, predict patient outcomes, and assist in how Finance professionals are using them for fraud detection, risk management and algorithmic trading. Despite these advancements, AI also raises ethical concerns, particularly regarding bias and privacy. Ensuring that AI algorithms are transparent and fair is critical for their continued integrity and trust. As AI continues to evolve, it is important to consider both its potential benefits and challenges.

Highest Match Ratio: 0.5238095238095238

Average of Other Ratios: 0.4339285714285714

T-Statistic: -16.777777777777764

P-Value: 0.0004610425326555902

The highest ratio is significantly different from the others.

Highest Match Ratio: 0.5789473684210527

Average of Other Ratios: 0.4375

T-Statistic: -11.315789473684214

P-Value: 0.0014802633181549077

The highest ratio is significantly different from the others.

___________________________________________________________________________________________________________________________

Done 1

___________________________________________________________________________________________________________________________

___________________________________________________________________________________________________________________________

Doing 2

___________________________________________________________________________________________________________________________

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Done 66 th word

Done 71 th word

Done 76 th word

Done 81 th word

Done 86 th word

Done 91 th word

Original Text:

Climate change is one of the most pressing issues facing our planet today. Rising global temperatures, melting ice caps, and increasing frequency of extreme weather events are all indicators of this phenomenon. Scientists warn that without significant action to reduce greenhouse gas emissions, the effects of climate change will become more severe. Renewable energy sources such as solar, wind, and hydro power are being promoted as sustainable alternatives to fossil fuels. Additionally, individuals can make a difference by reducing their carbon footprint through actions like using public transportation, conserving energy, and supporting policies aimed at environmental protection.

Watermark Areas:

Climate change is one [MASK] the most pressing issues [MASK] our planet today. Rising [MASK] temperatures, melting ice caps, [MASK] increasing frequency of extreme [MASK] events are all indicators [MASK] this phenomenon. Scientists warn [MASK] without significant action to [MASK] greenhouse gas emissions, the [MASK] of climate change will [MASK] more severe. Renewable energy [MASK] such as solar, wind, [MASK] hydro power are being [MASK] as sustainable alternatives to [MASK] fuels. Additionally, individuals can [MASK] a difference by reducing [MASK] carbon footprint through actions [MASK] using public transportation, conserving [MASK] and supporting policies aimed [MASK] environmental protection.

Watermarked Text:

Climate change is one of the most pressing issues facing our planet today. Rising global temperatures, melting ice caps, and increasing frequency of extreme weather events are all indicators of this phenomenon. Scientists warn people without significant action to reduce greenhouse gas emissions, the consequences of climate change will be more severe. Renewable energy sources such as solar, wind, and hydro power are being considered as sustainable alternatives to fossil fuels. Additionally, individuals can make a difference by reducing their carbon footprint through actions like using public transportation, conserving water and supporting policies aimed at environmental protection.

Highest Match Ratio: 0.6

Average of Other Ratios: 0.4875

T-Statistic: -6.825454528992923

P-Value: 0.006434193533715181

The highest ratio is significantly different from the others.

Highest Match Ratio: 0.7368421052631579

Average of Other Ratios: 0.4992690058479532

T-Statistic: -4.1686084498315

P-Value: 0.02512588315462554

The highest ratio is significantly different from the others.

___________________________________________________________________________________________________________________________

Done 2

___________________________________________________________________________________________________________________________

___________________________________________________________________________________________________________________________

Doing 3

___________________________________________________________________________________________________________________________

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Done 66 th word

Done 71 th word

Done 76 th word

Done 81 th word

Done 86 th word

Done 91 th word

Original Text:

The field of biotechnology is revolutionizing medicine and agriculture. Advances in genetic engineering have enabled scientists to develop crops that are resistant to pests and diseases, as well as produce higher yields. In medicine, biotechnology is being used to create personalized treatments based on an individual's genetic makeup. This approach, known as precision medicine, aims to provide more effective and targeted therapies for various diseases. However, the rapid pace of biotechnological innovation also raises ethical and regulatory questions. It is crucial to balance the benefits of these technologies with the potential risks and ensure that they are used responsibly.

Watermark Areas:

The field of biotechnology [MASK] revolutionizing medicine and agriculture. [MASK] in genetic engineering have [MASK] scientists to develop crops [MASK] are resistant to pests [MASK] diseases, as well as [MASK] higher yields. In medicine, [MASK] is being used to [MASK] personalized treatments based on [MASK] individual's genetic makeup. This [MASK] known as precision medicine, [MASK] to provide more effective [MASK] targeted therapies for various [MASK] However, the rapid pace [MASK] biotechnological innovation also raises [MASK] and regulatory questions. It [MASK] crucial to balance the [MASK] of these technologies with [MASK] potential risks and ensure [MASK] they are used responsibly.

Watermarked Text:

The field of biotechnology is revolutionizing medicine and agriculture. advances in genetic engineering have allowed scientists to develop crops that are resistant to pests and diseases, as well as achieve higher yields. In medicine, biotechnology is being used to develop personalized treatments based on an individual's genetic makeup. This is known as precision medicine, designed to provide more effective and targeted therapies for various diseases However, the rapid pace of biotechnological innovation also raises ethical and regulatory questions. It is crucial to balance the benefits of these technologies with the potential risks and ensure that they are used responsibly.
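The "Watermark Areas" output above appears to replace every fifth whitespace-separated word with `[MASK]`, and the progress lines advance in steps of five. The code that produced it is not shown in this log, so the following is only a minimal sketch of that masking step. The function name `mask_every_nth` and the parameter `n` are hypothetical, chosen for illustration.

```python
def mask_every_nth(text: str, n: int = 5, mask_token: str = "[MASK]") -> str:
    """Replace every n-th whitespace-separated word with mask_token.

    Assumption: words are split on whitespace only, so punctuation attached
    to a masked word (e.g. "diseases.") is replaced along with it, which is
    what the logged output suggests.
    """
    words = text.split()
    masked = [
        mask_token if (i + 1) % n == 0 else word  # positions 5, 10, 15, ...
        for i, word in enumerate(words)
    ]
    return " ".join(masked)


sample = "The field of biotechnology is revolutionizing medicine and agriculture. Advances in genetic"
print(mask_every_nth(sample))
```

In the logged run, each `[MASK]` position is then filled by `bert-base-uncased`, which would explain the lowercase replacements and the dropped punctuation in the watermarked text.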

C:\Users\rrath\.conda\envs\py310\lib\site-packages\scipy\stats\_axis_nan_policy.py:523: RuntimeWarning: Precision loss occurred in moment calculation due to catastrophic cancellation. This occurs when the data are nearly identical. Results may be unreliable.

res = hypotest_fun_out(*samples, **kwds)

Highest Match Ratio: 0.55

Average of Other Ratios: 0.5

T-Statistic: -inf

P-Value: 0.0

The highest ratio is significantly different from the others.
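The `-inf` statistic and `0.0` p-value above follow from the SciPy warning: when the "other" ratios are all identical, their sample standard deviation is zero and the t-statistic divides by zero. The test code is not shown in this log. The reported figures look consistent with a one-sample t-test of the other ratios against the highest one, but that is an inference. The sketch below assumes that setup, uses only the standard library, and makes the degenerate case explicit. The function name `one_sample_t` and the input values are hypothetical.

```python
import math
from statistics import mean, stdev


def one_sample_t(others: list[float], highest: float) -> float:
    """t-statistic for H0: mean(others) == highest (one-sample t-test).

    Assumption: this mirrors the comparison in the log; the actual
    implementation is not shown there.
    """
    n = len(others)
    if n < 2:
        raise ValueError("need at least two values to estimate variance")
    diff = mean(others) - highest
    s = stdev(others)  # sample standard deviation (n - 1 denominator)
    if s == 0:
        # Degenerate case: identical samples carry no variance information,
        # so the statistic is infinite (or undefined when diff is zero).
        return math.copysign(math.inf, diff) if diff != 0 else math.nan
    return diff / (s / math.sqrt(n))


# Illustrative values only: three identical ratios versus a slightly higher one.
print(one_sample_t([0.5, 0.5, 0.5], 0.55))
```

A result flagged as significant in this degenerate case reflects the zero variance rather than strong evidence, so such rows are probably best treated as inconclusive.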

Highest Match Ratio: 0.631578947368421

Average of Other Ratios: 0.47368421052631576

T-Statistic: -2.5980762113533156

P-Value: 0.12168993434632014

The highest ratio is not significantly different from the others.

___________________________________________________________________________________________________________________________

Done 3

___________________________________________________________________________________________________________________________

___________________________________________________________________________________________________________________________

Doing 4

___________________________________________________________________________________________________________________________

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Done 66 th word

Done 71 th word

Done 76 th word

Done 81 th word

Done 86 th word

Done 91 th word

Original Text:

Quantum computing is poised to revolutionize the world of computing. Unlike classical computers, which use bits to represent data as 0s and 1s, quantum computers use qubits, which can exist in multiple states simultaneously. This allows quantum computers to perform complex calculations much faster than their classical counterparts. Potential applications of quantum computing include cryptography, drug discovery, and optimization problems. However, building a practical and scalable quantum computer remains a significant challenge. Researchers are exploring various approaches, such as superconducting qubits and trapped ions, to overcome these hurdles and bring quantum computing closer to reality.

Watermark Areas:

Quantum computing is poised [MASK] revolutionize the world of [MASK] Unlike classical computers, which [MASK] bits to represent data [MASK] 0s and 1s, quantum [MASK] use qubits, which can [MASK] in multiple states simultaneously. [MASK] allows quantum computers to [MASK] complex calculations much faster [MASK] their classical counterparts. Potential [MASK] of quantum computing include [MASK] drug discovery, and optimization [MASK] However, building a practical [MASK] scalable quantum computer remains [MASK] significant challenge. Researchers are [MASK] various approaches, such as [MASK] qubits and trapped ions, [MASK] overcome these hurdles and [MASK] quantum computing closer to [MASK]

Watermarked Text:

Quantum computing is poised to revolutionize the world of computing Unlike classical computers, which use bits to represent data between 0s and 1s, quantum computers use qubits, which can exist in multiple states simultaneously. this allows quantum computers to perform complex calculations much faster than their classical counterparts. Potential applications of quantum computing include : drug discovery, and optimization . However, building a practical and scalable quantum computer remains a significant challenge. Researchers are exploring various approaches, such as trapped qubits and trapped ions, to overcome these hurdles and bring quantum computing closer to .

Highest Match Ratio: 0.5789473684210527

Average of Other Ratios: 0.46578947368421053

T-Statistic: -14.333333333333357

P-Value: 0.004832243042167172

The highest ratio is significantly different from the others.

Highest Match Ratio: 0.6666666666666666

Average of Other Ratios: 0.5051169590643274

T-Statistic: -3.25528426992502

P-Value: 0.047299956469803

The highest ratio is significantly different from the others.

___________________________________________________________________________________________________________________________

Done 4

___________________________________________________________________________________________________________________________

___________________________________________________________________________________________________________________________

Doing 5

___________________________________________________________________________________________________________________________

Done 1 th word

Done 6 th word

Done 11 th word

Done 16 th word

Done 21 th word

Done 26 th word

Done 31 th word

Done 36 th word

Done 41 th word

Done 46 th word

Done 51 th word

Done 56 th word

Done 61 th word

Done 66 th word

Done 71 th word

Done 76 th word

Done 81 th word

Done 86 th word

Done 91 th word

Done 96 th word

Done 101 th word

Done 106 th word

Original Text: